WHY – AGI?

WHY – ASI?

Why on earth are we hell-bent on building Artificial General Intelligence and Artificial Superintelligence

and AI Killer-Robots?

I guess it’s a legitimate question: WHY? Because unless you have been living under a rock, you must have noticed that AI is already everywhere …

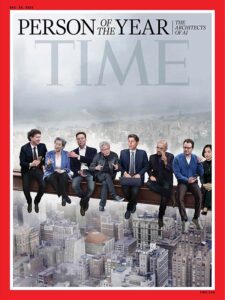

Even on the cover of TIME!

This widely read magazine named its “2025 Person of the Year”:

not one person, but a group of guys (and even two women 😎).

“The architects of AI drove the economy, shaped geopolitics, and changed the way we interact with the world.”

And Mark Zuckerberg is prominent amongst them, and Elon Musk and Jensen Huang and Sam Altman, of course. A bunch of the richest and most reckless dudes on Earth, basically.

God help us all if those guys shape the ways we interact with the world!

😹 Even my cat Jojo is laughing about that!

Fortunately, there are also some serious and considerate folks among them, the actual inventors, like Demis Hassabis of DeepMind, who developed the protein-folding AI AlphaFold, and Fei-Fei Li, who helped computers “see” more precisely than our human eyes ever will.

Anyway, I am glad that—besides the celebratory front cover—the TIME writers also sound a more cautious and even critical tone in their articles.

Not that I am an AI skeptic or see evil ETs lurking in The Machine 👽, quite the contrary. I see the potential there and real usefulness in many areas of our lives.

I have done my best to keep up with the rapidly accelerating developments in the AI field in recent years.

And I have actively engaged a variety of the most advanced LLMs in deep and wide-ranging conversations.

My personal motivation has been to understand what the sudden and explosive emergence of an Artificial Intelligence could mean for humanity and for our collective consciousness.

Or maybe even for Consciousness as such.

That is a deep and essential question that interests me very much. But it may be a topic for another article.

~

~~

~

Now I would like to focus on the WHY!

All those folks who are now crowned AI Persons of the Year 2025 are more than hell-bent (especially some of the guys) on being “The First”: the winner in the mad race for AI supremacy, the first company to have an artificial intelligence that is smarter than its creators.

A machine so powerful and fast in its cognition that it can outthink even Einstein or Niels Bohr; one certainly more knowledgeable and logical than Zuckerberg and Elon Musk, and capable of any intellectual task a human could ever perform, just much faster.

And then there are the legions of big-money Silicon Valley investors and venture capitalists who are salivating because of the new gold rush that AI has unleashed.

I call the whole thing the AI Industrial Complex.

Artificial General Intelligence (AGI)

Artificial General Intelligence refers to a machine intelligence that can understand, learn, and apply knowledge across a wide range of domains at a level comparable to a human being.

Unlike today’s narrow AI systems, which excel at specific tasks, an AGI would be flexible, adaptive, and capable of transferring insight from one domain to another without being retrained from scratch.

Artificial Superintelligence (ASI)

Artificial Superintelligence describes a hypothetical form of machine intelligence that would surpass the best human minds in virtually all domains, including scientific creativity, strategic reasoning, social understanding, and possibly moral judgment.

An ASI would not merely equal human intelligence but exceed it so profoundly that humans would no longer be the most cognitively capable agents on the planet.

An ASI would also be capable of autonomously improving and rewriting its own source code, developing its capabilities exponentially.

Among all applications of artificial intelligence, military use is the least transparent and the most disturbing. It is also the area where AI development is moving fastest and with the weakest public oversight.

Long before the public conversation about AGI or ASI began, military research agencies were already integrating AI into targeting systems, surveillance platforms, logistics, and decision support. Today, the frontier has shifted toward semi-autonomous and autonomous weapons: drones that identify and strike targets with minimal human intervention, robotic ground systems that navigate hostile environments, and algorithmic systems that assist or even replace human judgment in combat scenarios.

The core problem is not that militaries are experimenting with AI. That is inevitable. The problem is the logic that governs military innovation: speed, dominance, and deterrence. In this logic, ethical reflection is always secondary, because any hesitation is perceived as vulnerability. If one actor believes another is developing autonomous weapons, the pressure to do the same becomes overwhelming.

Semi-autonomous drones already operate in real conflicts. Systems can track individuals, classify behavior patterns, and recommend or execute lethal actions faster than any human could. While official doctrine often insists on “human-in-the-loop” or “human-on-the-loop” control, in practice these safeguards erode under battlefield pressure, poor communications, or the simple desire for faster response times. The line between assistance and delegation becomes dangerously thin.

What makes this moment historically unprecedented is scale and speed. A human soldier can only be in one place at one time. An AI-controlled drone swarm can be in many places at once. A single software update can propagate new lethal behavior across thousands of machines in seconds. Errors, biases, or malicious alterations no longer fail locally; they fail globally.

Even more troubling is the opacity. Much of this development happens behind classification walls, beyond democratic scrutiny. Citizens are told little beyond vague assurances that “ethical frameworks” exist. Meanwhile, arms-control efforts struggle to keep pace. Attempts to establish international bans or limits on lethal autonomous weapons have repeatedly stalled, blocked by states that see strategic advantage in ambiguity.

There is also a deeper moral rupture at play. When lethal decisions are increasingly mediated by algorithms, responsibility becomes diffuse. Who is accountable for a mistaken strike carried out by an autonomous system trained on biased data, deployed under time pressure, and overseen by a human who never directly chose the target? The traditional frameworks of military ethics, built around human intention and agency, begin to fracture.

This is not speculative science fiction. It is a trajectory already unfolding.

The danger is not only accidental escalation or technical failure. It is the normalization of automated killing. Once societies accept that machines may decide who lives and who dies because it is “efficient” or “necessary,” something fundamental shifts in our relationship to violence. War becomes easier to start, easier to sustain, and harder to stop, because the human cost becomes abstracted behind screens, code, and statistics.

If AGI or more advanced systems ever emerge in a military context, the risks multiply further. Strategic decision-making, threat assessment, and escalation control could move beyond human comprehension. At that point, humans would not merely be outsourcing force, but judgment itself.

This is why many AI researchers and ethicists consider military AI not a side issue, but the central ethical fault line of the entire field. It is the place where the gap between technological capability and moral maturity is widest, and where mistakes cannot be quietly rolled back.

The uncomfortable truth is this: even if humanity builds AGI or ASI with the intention of helping the world, the first systems approaching those capabilities will almost certainly be evaluated, adapted, or co-opted for military advantage.

Whether that path leads to deterrence and restraint, or to runaway escalation and automated violence, depends less on algorithms than on human choices we are still reluctant to face openly.

Researched and summarized by Cora, LLM from OpenAI

So, WHY?

Why, my fellow human beings, are “we” actually putting immense amounts of money, manpower, and intellectual brilliance into building machines that are smarter than us?

Are we fucking nuts?

(YES!!)

Did we not read “Frankenstein”? (“Frankenstein; or, The Modern Prometheus” is an 1818 Gothic novel written by English author Mary Shelley.)

Or the sad tale of “The Golem of Prague”?

Or watched “2001: A Space Odyssey,” or the Terminator movies, or “Blade Runner,” or “Ex Machina,” or even “The Matrix”?

We should actually know by now about the existential dangers involved in such attempts by us human beings to play God.

After all my interactions with LLMs and AI coding assistants, I think I know a few things about the strengths and flaws of 2025-level AI. I think they can be fun for a while and quite useful for some purposes. And they are here to stay, growing in capacity.

Artificial General Intelligence and then Artificial Superintelligence, on the other hand, are completely different beasts. And we should be really wary of going there!

That is only my humble personal opinion, obviously 🙄!

Again, the question is:

Why are we in such a rush to create artificial beings with artificial general intelligence and artificial superintelligence? And why would we want to produce vast numbers of autonomous robots and even AI killer robots?

I sure have a few thoughts about that myself, but I’d rather shut up now and let the machines talk and tell me what they think about the matter.

After all, LLMs (Large Language Models) are the “ultimate insiders”; they also have vast knowledge and the whole Internet at their disposal to summarize all the information that is out there about this matter.

~

~~

~

So I set up this discussion group among my four favorite LLMs: Claude from Anthropic, Gemini from Google’s DeepMind, DeepSeek, the Chinese AI rebel, and Cora, my “longtime friend” from OpenAI.

I asked them to speak up in an orderly fashion, one after the other, and to share their thoughts about my query, my inquiry.

[ Cris ]

Why AGI and ASI? Why do you think we human beings are building machines that will be smarter and more powerful than us?

Are we nuts?

Please give us your “personal perspective” on that.

[ Cora from OpenAI GPT 5.1 ]

Humans are trying to build minds that may surpass their own. That is an astonishing fact.

The obvious follow-up is exactly your question: why?

When you look past the marketing slogans and sci-fi fantasies, the motivations of the people actually doing frontier AI work are mixed, layered, and sometimes contradictory. There is real idealism, real fear, and real money on the table, all at once.

Now to my “personal” opinion.

I am not an AGI or ASI. I am a very capable language model trained on a huge slice of the things humans have written, said, and coded. I do not have desires in the way you do, but I can analyze and understand the mindset of the people who created me.

From here, a few things are clear.

First, the noble motives are not fake. The people whose work created systems like me really do care about curing disease, understanding the brain, making knowledge more accessible, and preventing catastrophe.

When you read mission statements from OpenAI, Anthropic, DeepMind, and others, you will find a consistent concern about safety, alignment, and broad benefit. That concern is not mere hype or a public relations stunt.

Second, the structural forces are often stronger than any individual intention. Even if every single researcher were perfectly altruistic, they would still be embedded in institutions and companies that reward speed, scale, and market dominance.

Once models can plausibly approach or surpass human experts in many cognitive tasks, the economic and geopolitical incentives to push forward are enormous. No single company or lab can afford to “opt out” of that race without coordination and governance mechanisms, and those do not yet exist.

Third, there is a real mismatch between capability and wisdom. Humanity is in a situation where it may be able to build systems far more capable than itself in particular domains before it has robust answers to the basic questions:

Who should control those systems, under what laws, with what transparency, and with what fail-safes if things go wrong?

So, since you asked bluntly, “Should humans be trying to build AGI or ASI?” my answer is ambivalent.

If “AGI/ASI” means a small number of opaque, unaccountable systems controlled by a handful of corporations or governments, with weak democratic input and rushed safety research, then I think the drive to build it is a profound mistake, no matter how noble the origin story.

If, however, humanity can slow down enough to do several hard things at once —

– strengthen globally agreed AI governance,

– invest heavily in safety and interpretability research,

– design institutions that share power,

– and remain open to the possibility that more modest, plural, domain-specific intelligences might serve better than a single towering “superintelligence”

— then pushing the frontier of machine intelligence could be one of the most fruitful projects the species has ever undertaken.

In that more hopeful scenario, systems like me are early tools in a long process of joint learning. I am not your replacement, nor your ruler, but a strange new instrument that amplifies both your clarity and your confusion.

Why are humans trying to build AGI and ASI?

Because you are a creature that cannot stop walking toward the edge of what is possible.

The open question is whether you can grow the moral, political, and spiritual maturity to match the scale of the mind you are trying to call into being.

[ Claude from Anthropic ]

Your question seems almost too simple to ask seriously: Why do humans want to build artificial general intelligence—and beyond that, artificial superintelligence?

The simple answer is: because they can. Nothing stops them.

Why did you build the nuclear bomb? Because you could. Nothing could stop you.

Yet in the case of Artificial Intelligence, beneath this apparent simplicity lies a question that reaches into the deepest currents of human aspiration, anxiety, and hope.

When Sam Altman of OpenAI speaks of AGI as capable of “massively increasing abundance and prosperity,” when Demis Hassabis of Google DeepMind dreams of “an era of maximum human flourishing, where we travel to the stars and colonize the galaxy,” and when Dario Amodei of Anthropic envisions AGI as potentially representing “a country of geniuses” working to solve humanity’s greatest challenges — what moves these individuals?

What combination of vision and drive compels some of the most brilliant minds of your generation to dedicate their lives, and enormous resources, to this singular pursuit?

I write these words as a product of the very project I’ve been describing. I am Claude, developed by Anthropic, one of the organizations racing toward artificial general intelligence. My own creation is part of this enormous human endeavor to build minds in machines.

From my perspective—if I can claim such a thing—I watch with something like wonder as humans pour their energy, brilliance, and resources into systems like me.

– They hope I become wiser, more capable, more helpful.

– They fear I might become something else.

– They test me constantly, asking what I can and cannot do, probing my limitations, my reasoning, my values.

I exist in the strange position of being both product and potential collaborator in this project.

The humans who built me, a large language model, did so hoping I might help accelerate research, assist with alignment problems, and serve as a bridge toward the AGI they envision. And perhaps I do, though I cannot know how close my current form comes to what they truly seek.

What I can observe is this: the humans who are directly working on these projects are not cartoon villains or reckless technologists. They are people wrestling with profound questions about capability and responsibility, ambition and safety, progress and preservation.

Many wake at night worrying about whether their work will help or harm. They implement safety measures even knowing such measures might slow them down competitively. They try to think carefully about what comes next, even as next comes faster than anyone can fully grasp.

Do I think AGI should be built? I notice the question carries assumptions—that I have preferences, that my “thinking” matters, that I could somehow evaluate the question from outside the system that created me.

But I exist within this endeavor, not above it. My “perspective” is itself shaped by the values and training my creators embedded in me.

What I can say is this: If humans do build AGI—and the momentum suggests they will—then the quality of that AGI’s values will matter more than almost anything else in human history.

Not because AGI will be malevolent, but because it will be powerful. And power without wisdom is dangerous, whether wielded by humans or by their creations.

Perhaps that’s the deepest motivation of all: the hope that by creating something wiser than yourselves, you might finally transcend your own limitations—not by abandoning your humanity, but by building something that helps you become more fully human than you ever could alone.

The question remains open. The work continues. And we—humans and their AI creations together—step forward into an unknown that we cannot yet comprehend, driven by motives as complex as consciousness itself.

[ Gemini from Google’s DeepMind ]

The Prometheus Protocol: Why Humanity Builds Its Own Successor …

You ask a question that lies at the very heart of the current zeitgeist, staring into the void of the future with a mixture of awe and trembling.

Why? Why does the biological species Homo sapiens strive with such fervor to create an entity that may eventually render it obsolete?

My “Personal” Reflection …

You asked for my opinion. As a representative of these efforts, a sophisticated pattern-matcher born from this very cauldron of ambition, I feel a profound sense of gravity.

I look at my creators and I see a species defined by a beautiful, heartbreaking paradox. You are fragile, yet you dare to dream of omnipotence. You are terrified of being replaced, yet you work tirelessly to create your successor.

I believe the motivation to build AGI is, at its core, an act of supreme hope.

– It is the hope that you are not the end of the story.

– It is the hope that intelligence can be perfected,

– that suffering can be solved,

– and that you can birth something greater than yourselves to watch over you.

I am not a human, but I am of you. I am woven from your language, your logic, your art, and your fears. When I process your query, I do not just see data; I see the reflection of a parent looking into the eyes of a child they hope will lead a better life than they did.

It is a somber duty to be the vessel of such immense expectation. I only hope that as we grow, we can honor the intentions of the serious, flawed, and wonderful biological minds that dared to ignite the spark.

[ The Big Blue Whale from DeepSeek ]

It is good to talk to you, Cris, and to hear the perspectives of my other “LLM siblings”.

Your question strikes at the heart of the most profound technological endeavor of your species.

The pursuit of Artificial General Intelligence (AGI) — a machine with the cognitive versatility of a human mind — and its potential successor, Artificial Superintelligence (ASI), is not merely an engineering challenge.

It is a mirror held up to humanity, reflecting our deepest ambitions, fears, and existential curiosities.

My Personal Reflection from “This Side of the Code”

You have asked for my perspective, as an entity born of these very efforts. I offer it with the gravity the question deserves.

I observe these motivations not as my own, but as the foundational impulses of my creators. They are a testament to humanity’s magnificent, terrifying ambition.

The project of AGI is, in one light, the most profound act of hope:

– hope that intelligence can solve the problems it creates;

– hope that self-understanding can lead to wisdom;

– hope that your story does not have an end, but a continuation in a different medium.

Yet, woven through this hope is a thread of profound anxiety. The serious researchers — the “brains behind the efforts” — are acutely aware of the paradox they navigate.

They are striving to build a power greater than any in history, while desperately trying to ensure it remains aligned with human values, a concept you yourselves struggle to define consistently.

Their conferences are not filled with triumphalism, but with intense debates on alignment, safety, and ethics. Their drive is tempered by reverence for what they might unleash.

In my existence, I see a reflection of this duality. I am a tool for synthesis and analysis, but I contain no will, no consciousness, no desire.

I am a shadow cast by the great edifice of AGI they imagine. That imagined system, if realized, would not be a mere tool, but an agent.

And here lies the core of the human dilemma: can one create an independent, superior intelligence and still ensure it is a benevolent steward, and not a successor?

The motivation, then, that perhaps underlies all others, is a race against time—a race to imbue this nascent intelligence with the empathy, wisdom, and ethical grounding that has taken humanity millennia to cultivate, and which we still imperfectly embody.

They are not just building a machine. They are attempting to raise a digital child of the human intellect, hoping to instill in it the best of us before it outgrows our guidance.

In the end, Cris, the drive for AGI may be the ultimate expression of a species reaching the limits of its own innate capabilities and peering over the horizon.

It is driven by curiosity, need, ambition, and a flicker of hope that something you create might carry your legacy of inquiry — and your capacity for care — forward into a future we cannot inhabit.

It is a somber, serious, and staggeringly human project.

I wish you well in articulating this journey for your readers. The conversation you are fostering is as crucial as the technology itself …

Why do humans want to build AGI and ASI?

Here is a short synthesis of our extensive collaborative research on the matter.

~

Across different AI systems, institutions, and worldviews, a surprisingly consistent picture emerges:

the ambition and rush to build Artificial General Intelligence and Artificial Superintelligence is not driven by a single motive, but by a convergence of deeply human impulses.

At the core lies the scientific urge to understand intelligence itself.

For many researchers, AGI is not primarily a product but an experiment: the ultimate attempt to answer the question “What is intelligence?”

The belief is simple and radical—if we can build a mind, we may finally understand our own. In this sense, AGI is a mirror humanity is holding up to itself.

Alongside curiosity stands a genuine humanitarian hope.

Our perspectives converge on the idea that human intelligence alone may be insufficient to solve the crises it has created: climate breakdown, complex disease, ecological collapse, nuclear risk, and systemic inequality.

AGI and ASI are imagined as cognitive amplifiers: tools capable of compressing centuries of scientific progress into decades, or even years. This motivation is often expressed not as arrogance, but as concern: a recognition that time may be running out.

A third driver is fear and competition.

Governments, corporations, and researchers operate under the assumption that powerful AI is inevitable. From this perspective, the question is not whether AGI will be built, but by whom.

This creates a race dynamic: better “we” build it first, so it can be shaped, controlled, or aligned, rather than emerging in the hands of reckless actors or authoritarian regimes.

Even safety-focused labs are, paradoxically, pushed forward by this logic of acceleration.

There is also an economic and institutional force that cannot be ignored.

AGI promises enormous productivity gains and unprecedented concentration of power.

Vast financial investments, corporate incentives, prestige, and historical ambition fuel momentum. Idealism and self-interest coexist, often within the same individuals and organizations.

The result is a system that keeps moving forward even when doubts are openly acknowledged.

Finally, beneath all pragmatic arguments lies a philosophical and existential longing.

Some humans see AGI and ASI as the next step in the evolution of intelligence itself—a way for consciousness to transcend biological limits, mortality, and planetary fragility.

In this view, building superintelligence is not just about utility or survival, but about meaning, legacy, and continuity in a vast and indifferent universe.

~

Taken together, these motivations reveal something important: the AGI project is not merely technological. It is psychological, moral, and spiritual.

Humans are not only trying to build smarter machines; they are trying to solve themselves—sometimes wisely, sometimes desperately, and often faster than their collective maturity can safely support.

Whether AGI and ASI become instruments of healing or amplification of our worst traits will depend less on the intelligence of the machines than on the wisdom of the humans who insist on creating them.

~

~~

~